Kubernetes Cost Optimization Report

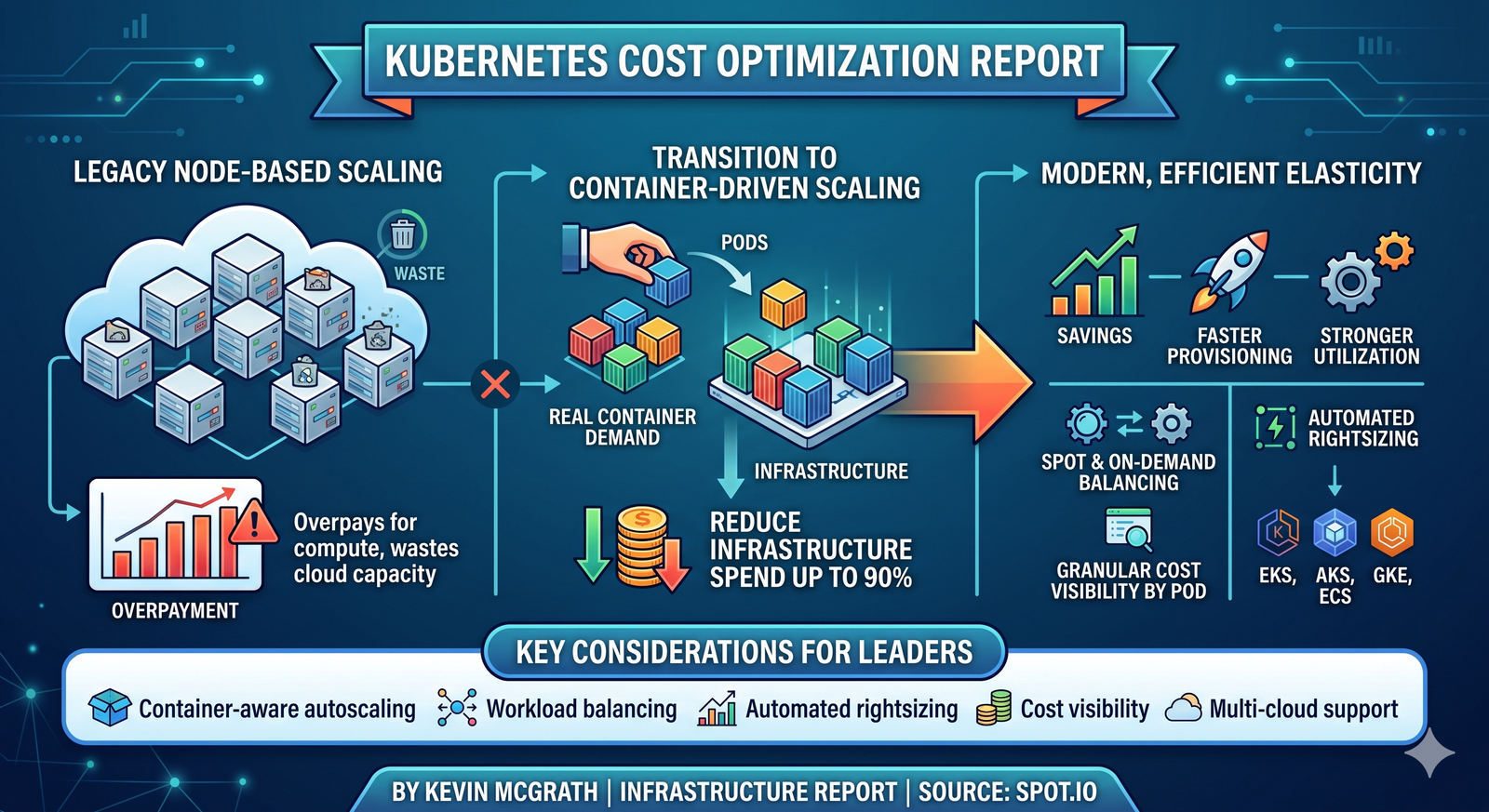

Teams running Kubernetes often overpay for compute because node-first scaling ignores actual container demand and leaves cloud capacity idle.

By Kevin McGrath | Infrastructure Report | Source: Spot.io

Early Kubernetes operations focused on cluster uptime, manual node sizing, and basic autoscaling policies.

Now enterprise teams need cost-efficient elasticity, faster provisioning, and stronger utilization across multi-cloud environments.

According to Spot.io, container-driven scaling can reduce infrastructure spend by up to 90% while improving workload placement and responsiveness.

Instead of selecting static instances first, modern platforms match compute, memory, storage, and policy needs directly to running pods.
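As a rough illustration of the container-first idea, the sketch below sums the resource requests of pending pods and then picks the cheapest instance type that can hold them. It is a minimal sketch under stated assumptions, not how any particular platform works: the `PodRequest` and `InstanceType` classes, the `cheapest_fit` helper, and the catalog names and prices are all hypothetical, and it ignores storage, policy constraints, and the capacity that real nodes reserve for system components.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class PodRequest:
    """Resources a pod asks for (hypothetical, simplified model)."""
    name: str
    cpu_millicores: int
    memory_mib: int


@dataclass(frozen=True)
class InstanceType:
    """A candidate node shape; names and prices are illustrative only."""
    name: str
    cpu_millicores: int
    memory_mib: int
    hourly_cost: float


def cheapest_fit(
    pods: Sequence[PodRequest], catalog: Sequence[InstanceType]
) -> Optional[InstanceType]:
    """Return the cheapest single instance that fits all pods, or None."""
    need_cpu = sum(p.cpu_millicores for p in pods)
    need_mem = sum(p.memory_mib for p in pods)
    candidates = [
        t for t in catalog
        if t.cpu_millicores >= need_cpu and t.memory_mib >= need_mem
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda t: t.hourly_cost)


# Pending pods drive the choice, rather than a pre-selected node size.
pending = [
    PodRequest("api", cpu_millicores=500, memory_mib=1024),
    PodRequest("worker", cpu_millicores=1500, memory_mib=2048),
]
catalog = [
    InstanceType("small", 2000, 4096, 0.10),
    InstanceType("medium", 4000, 8192, 0.20),
    InstanceType("large", 8000, 16384, 0.40),
]
choice = cheapest_fit(pending, catalog)
print(choice.name if choice else "no single instance fits")
```

The point of the design is the direction of the decision: demand is measured from the pods first, and the instance is selected second. A production system would also need to bin-pack across multiple nodes and account for per-node overhead.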

⚠ Enterprises that keep legacy node-based scaling models may face rising cloud waste, delayed application capacity, and budget overruns within the next 12–24 months.

For CIOs and platform leaders, inefficient Kubernetes economics directly impact cloud margins, release velocity, and infrastructure resilience.

- Container-aware autoscaling for real-time demand

- Spot and on-demand workload balancing

- Automated rightsizing across clusters

- Granular cost visibility by pod and service

- Support for EKS, AKS, GKE, and ECS

This approach strengthens governance while turning Kubernetes into a measurable business efficiency engine.

Enterprise Kubernetes Savings Assessment

Identify hidden waste, scaling gaps, and modernization opportunities across your container infrastructure.

✔ Cluster cost exposure analysis

✔ Rightsizing opportunities

✔ Multi-cloud scaling roadmap

✔ Executive savings recommendations

Download Full Report