Kubernetes Cost Optimization Report

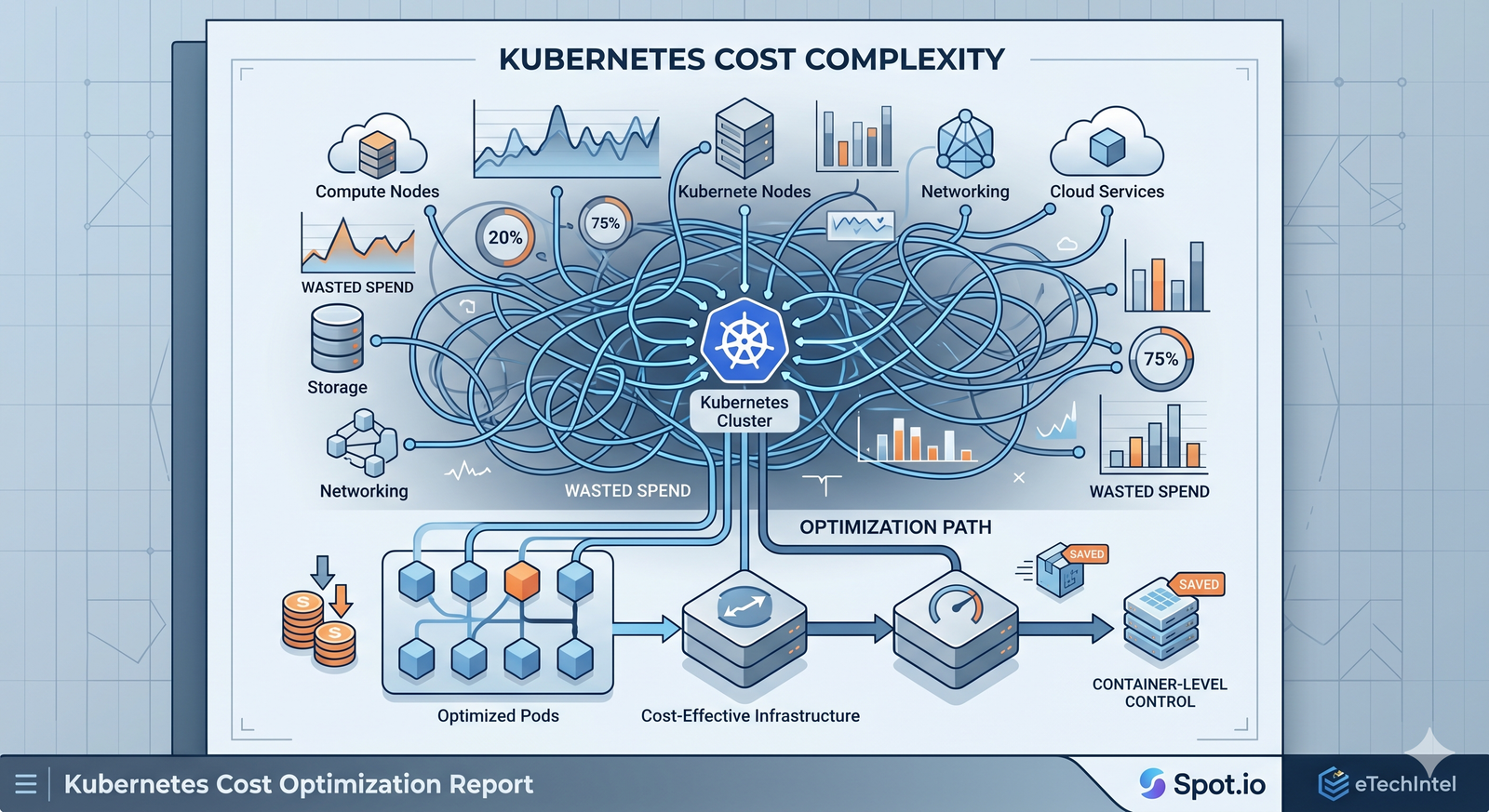

Kubernetes scaling decisions often waste compute spend and delay workloads. Enterprise teams need container-first infrastructure control now.

By Spot.io | Research Report | Source: Spot.io

Early Kubernetes operations focused on node provisioning, instance sizing, and reactive autoscaling thresholds.

Now, rising cloud costs and faster release cycles are pushing CTOs to optimize infrastructure at the container level.

Organizations that scale around containers instead of nodes can cut cloud compute costs by up to 90% while improving workload availability.

Container-driven scaling matches real workload demand to the right capacity pool, reducing idle resources and operational overhead.
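As a rough illustration of matching workload demand to a capacity pool, the sketch below picks the cheapest pool that can still fit a container's resource request. The `Pool` type, its fields, and `pick_pool` are hypothetical names made up for this example; they are not part of any Spot.io or Kubernetes API, and real schedulers weigh many more signals.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Pool:
    """Hypothetical capacity pool (for example a spot or on-demand node group)."""
    name: str
    cpu_free: float             # unallocated vCPUs
    mem_free_gib: float         # unallocated memory in GiB
    hourly_cost_per_cpu: float  # illustrative price, not a real quote


def pick_pool(cpu_req: float, mem_req_gib: float, pools: List[Pool]) -> Optional[Pool]:
    """Return the cheapest pool that fits the request, or None if none fits."""
    candidates = [
        p for p in pools
        if p.cpu_free >= cpu_req and p.mem_free_gib >= mem_req_gib
    ]
    if not candidates:
        return None  # caller would need to provision new capacity
    return min(candidates, key=lambda p: p.hourly_cost_per_cpu)
```

Under these assumptions, a small request lands on the cheaper pool, while a request too large for it falls through to the next pool that has room.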

⚠ Within the next 12–24 months, enterprises relying on static node strategies may face runaway cloud spend, delayed deployments, and reduced application resilience.

For IT leaders, this shift impacts budget forecasting, platform performance, and multi-cloud operating models across critical environments.

- Container-aware autoscaling policies

- Spot and on-demand mix optimization

- Real-time rightsizing recommendations

- Granular cost showback by workload

- Fallback resilience across capacity pools
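To make one of the capabilities above concrete, here is a minimal sketch of a rightsizing recommendation: it suggests a CPU request from observed usage by taking a high percentile and adding headroom. The function name, the 95th-percentile default, and the 20% headroom are assumptions chosen for illustration, not values from the report or from any vendor's product.

```python
import math
from typing import Sequence


def recommend_cpu_request(
    usage_samples: Sequence[float],
    percentile: float = 0.95,  # assumed default, tune per workload
    headroom: float = 1.2,     # assumed 20% safety margin
) -> float:
    """Suggest a CPU request (in vCPUs) from observed usage samples."""
    if not usage_samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(usage_samples)
    # Nearest-rank percentile: smallest value covering the given share of samples.
    rank = max(1, math.ceil(percentile * len(ordered)))
    return round(ordered[rank - 1] * headroom, 3)
```

Comparing the result with a container's current request gives a simple signal of over- or under-provisioning for that workload.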

Modern platforms turn Kubernetes into a more efficient operating model with less manual infrastructure management.

Enterprise Kubernetes Savings Assessment

Benchmark your current cluster efficiency and uncover immediate cost reduction opportunities for enterprise infrastructure teams.

✔ Waste exposure analysis

✔ Scaling maturity score

✔ Cost optimization roadmap

✔ Executive savings insights

Download Full Report